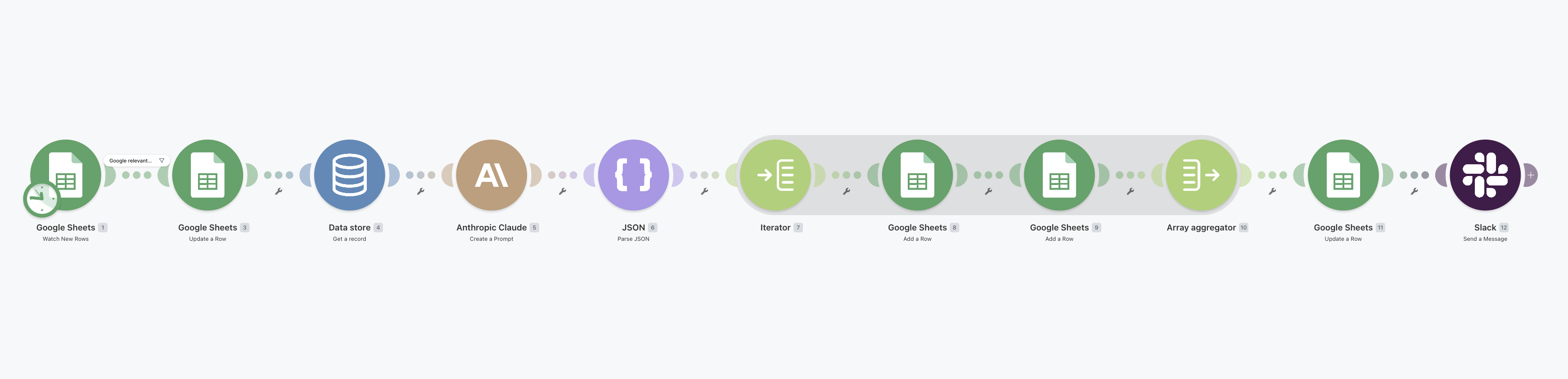

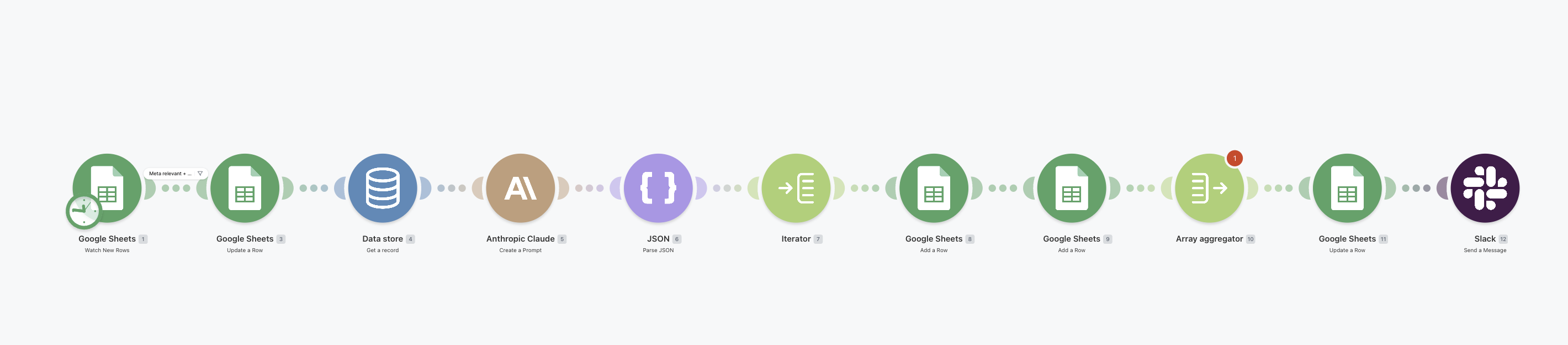

Phase 2 · Human in the loop

Live. Watched. Optimised.

Forever.

The gear flips here. In Phase 1, the AI did the work and the human approved. In Phase 2, the AI watches and proposes — and the human decides. Every action visible, every action reversible, every action logged.

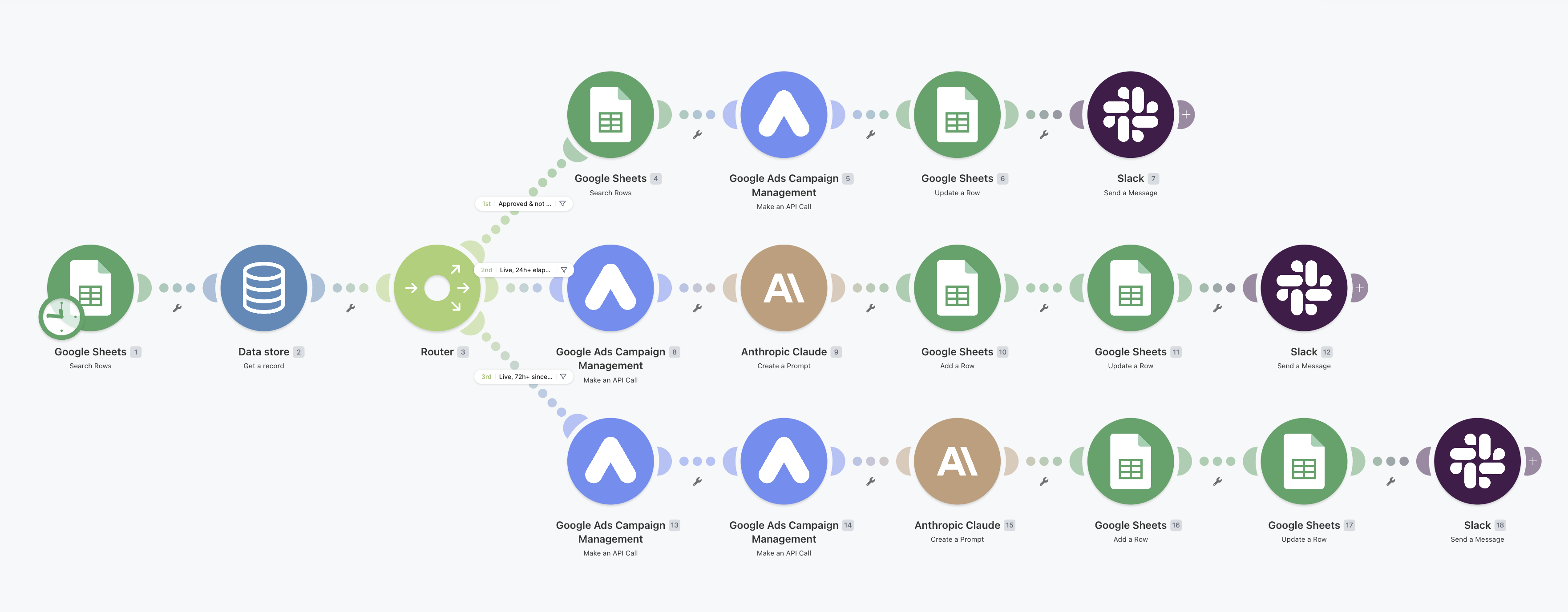

Once approved, the post-launch scenarios pick up the same row hourly and route it through one of three branches based on its current state. Build it. Diagnose it. Optimise it. Then keep cycling.
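As a rough illustration of that hourly routing, here is a minimal sketch. The 24h and 72h cadences and the `Built` status come from the branch descriptions; the `Approved` and `Live` status names, the `Row` fields, and the rule that a due health check runs before a due optimisation are assumptions made for the example, not the actual scenario logic.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

HEALTH_CHECK_EVERY = timedelta(hours=24)  # Branch B cadence
OPTIMISE_EVERY = timedelta(hours=72)      # Branch C cadence


@dataclass
class Row:
    # Hypothetical row shape; status values other than "Built" are assumed.
    status: str
    last_health_check: Optional[datetime] = None
    last_optimise: Optional[datetime] = None


def _due(last_run: Optional[datetime], every: timedelta, now: datetime) -> bool:
    """A branch is due if it has never run or its interval has elapsed."""
    return last_run is None or now - last_run >= every


def route(row: Row, now: datetime) -> Optional[str]:
    """Pick at most one branch for this hourly pass, based on row state."""
    if row.status == "Approved":
        return "build"
    if row.status == "Live":
        # Assumption: when both are due, the health check goes first.
        if _due(row.last_health_check, HEALTH_CHECK_EVERY, now):
            return "health_check"
        if _due(row.last_optimise, OPTIMISE_EVERY, now):
            return "optimise"
    # "Built" rows wait for a human to flip them Live; nothing to do.
    return None
```

Returning at most one branch per pass keeps each hourly run small; a row that is due for both simply gets the second branch on the next pass.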

Branch A

Build

Reads the strategy rows for the brief, creates the campaigns in the Ads UI in a paused state, marks the row Built, and pings Slack. A human flips them Live with a single click.

Branch B · 24h

Health Check

Pulls last-7-day performance, hands it to Claude for diagnosis against Benchmarks, logs the verdict to the OptimizationLog, and alerts Slack only if something is outside tolerance.
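The "always log, alert only outside tolerance" gate can be sketched as below. In the flow described here the diagnosis itself comes from Claude; this example swaps in a plain relative-deviation check purely to show the gating. The metric names, the 20% tolerance, and the log entry shape are all assumptions.

```python
from typing import Dict, List


def out_of_tolerance(
    metrics: Dict[str, float],
    benchmarks: Dict[str, float],
    tolerance: float = 0.20,  # hypothetical: 20% relative deviation allowed
) -> Dict[str, float]:
    """Return each metric whose relative deviation from its benchmark exceeds tolerance."""
    breaches = {}
    for name, target in benchmarks.items():
        actual = metrics.get(name)
        if actual is None or target == 0:
            continue  # nothing to compare against
        deviation = (actual - target) / target
        if abs(deviation) > tolerance:
            breaches[name] = round(deviation, 3)
    return breaches


def health_check(
    metrics: Dict[str, float],
    benchmarks: Dict[str, float],
    log: List[dict],
) -> bool:
    """Log every verdict; return True only when a Slack alert is warranted."""
    breaches = out_of_tolerance(metrics, benchmarks)
    verdict = "alert" if breaches else "ok"
    log.append({"verdict": verdict, "breaches": breaches})  # logged either way
    return verdict == "alert"
```

The caller would post to Slack only when `health_check` returns `True`, so quiet days still leave a trail in the log without generating noise.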

Branch C · 72h

Optimise

Google: pulls last-7-day search terms → Claude proposes negative keywords. Meta: detects ad fatigue → Claude proposes 2-3 fresh angles. Human 👍 to apply.
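One way to picture the approval gate: a proposal sits as pending until a human reacts, and nothing touches the ad account before that. The sketch below is illustrative only; the `Proposal` shape, the status names, and the `apply_fn` callback are hypothetical stand-ins for whatever actually calls the Google or Meta side.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Proposal:
    platform: str     # e.g. "google" or "meta"
    kind: str         # e.g. "negative_keywords" or "fresh_angles"
    items: List[str]  # what Claude proposed
    status: str = "pending"


def review(
    proposal: Proposal,
    approved: bool,
    apply_fn: Callable[[Proposal], None],
    log: List[dict],
) -> Proposal:
    """Apply a proposal only on human approval, and log the decision either way."""
    if proposal.status != "pending":
        raise ValueError("proposal has already been reviewed")
    if approved:
        apply_fn(proposal)  # the only place a change is pushed
        proposal.status = "applied"
    else:
        proposal.status = "rejected"
    log.append(
        {"platform": proposal.platform, "kind": proposal.kind, "status": proposal.status}
    )
    return proposal
```

Keeping the apply step behind a single function makes the "human decides" rule easy to audit: every applied change has a matching log entry, and rejected proposals are recorded too.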